Approximately 13 billion laboratory tests are administered every year in the United States, but not every result is timely or accurate. Laboratory missteps prevent patients from receiving appropriate, necessary, and sometimes lifesaving care. These medical errors are the third-leading cause of death in the nation.

To help reverse this trend, a research team from the MIT Department of Aeronautics and Astronautics (AeroAstro) Engineering Systems Lab and Synensys, a safety management contractor, examined the ecosystem of diagnostic laboratory data. Their findings, including six systemic factors contributing to patient hazards in laboratory diagnostic tests, offer a rare holistic view of this complex network — not just doctors and lab technicians, but also device manufacturers, health information technology (HIT) providers, and even government entities such as the White House. By viewing the diagnostic laboratory data ecosystem as an integrated system, an approach based on systems theory, the MIT researchers have identified specific changes that can lead to safer behaviors for health care workers and healthier outcomes for patients.

The study was conducted by AeroAstro Professor Nancy Leveson, who heads the System Safety and Cybersecurity group, along with Research Engineer John Thomas and graduate students Polly Harrington and Rodrigo Rose. A report of the study was submitted to the U.S. Food and Drug Administration this past fall. Improving the infrastructure of laboratory data has been a priority for the FDA, which contracted the study through Synensys.

Hundreds of hazards, six causes

In a yearlong study that included more than 50 interviews, the Leveson team found the diagnostic laboratory data ecosystem to be vast yet fractured. No one understood how the whole system functioned or the totality of substandard treatment patients received. Well-intentioned workers were being influenced by the system to carry out unsafe actions, MIT engineers wrote.

Test results sent to the wrong patients, incompatible technologies that strain information sharing between the doctor and lab technician, and specimens transported to the lab without guarantees of temperature control were just some of the hundreds of hazards the MIT engineers identified. The sheer volume of potential risks, known as unsafe control actions (UCAs), should not dissuade health care stakeholders from seeking change, Harrington says.

“While there are hundreds of UCAs, there are only six systemic factors that are causing these hazards,” she adds. “Using a system-based methodology, the medical community can address many of these issues with one swoop.”

Four of the systemic factors — decentralization, flawed communication and coordination, insufficient focus on safety-related regulations, and ambiguous or outdated standards — reflect the need for greater oversight and accountability. The two remaining systemic factors — misperceived notions of risk and lack of systems theory integration — call for a fundamental shift in perspective and operations. For instance, the medical community, including doctors themselves, tends to blame physicians when errors occur. Understanding the real risk levels associated with laboratory data and HIT might prompt more action for change, the report’s authors wrote.

“There’s this expectation that doctors will catch every error,” Harrington says. “It’s unreasonable and unfair to expect that, especially when they have no reason to assume the data they're getting is flawed.”

Think like an engineer

Systems theory may be a new concept to the medical community, but the aviation industry has used it for decades.

“After World War II, there were so many commercial aviation crashes that the public was scared to fly,” says Leveson, a leading expert in system and software safety. In the early 2000s, she developed the System-Theoretic Process Analysis (STPA), a technique based on systems theory that offers insights into how complex systems can become safer. The researchers used STPA in their report to the FDA. “Industry and government worked together to put controls and error reporting in place. Today, there are nearly zero crashes in the U.S. What’s happening in health care right now is like having a Boeing 787 crash every day.”

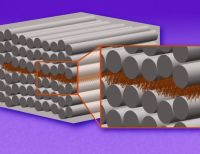

Other engineering principles that work well in aviation, such as control systems, could be applied to health care as well, Thomas says. For instance, closed-loop controls solicit feedback so a system can change and adapt. Having laboratories confirm that physicians received their patients’ test results or investigating all reports of diagnostic errors are examples of closed-loop controls that are not mandated in the current ecosystem, Thomas says.

“Operating without controls is like asking a robot to navigate a city street blindfolded,” Thomas says. “There’s no opportunity for course correction. Closed-loop controls help inform future decision-making, and, at this point in time, it’s missing in the U.S. health-care system.”
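The difference between sending a result and confirming its receipt can be shown with a minimal Python sketch. It is an illustration only, not code from the MIT report: the `Physician` class, the `drops_first` parameter (a stand-in for messages lost in transit), and both `send_*` functions are hypothetical names invented for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Physician:
    """Hypothetical recipient of lab results."""
    name: str
    drops_first: int = 0  # assumption: how many initial messages are lost in transit
    inbox: list = field(default_factory=list)

    def receive(self, result: str) -> bool:
        """Return True (an acknowledgment) only if the result actually arrived."""
        if self.drops_first > 0:
            self.drops_first -= 1
            return False
        self.inbox.append(result)
        return True


def send_open_loop(physician: Physician, result: str) -> None:
    """Open loop: send once and never check whether the result arrived."""
    physician.receive(result)


def send_closed_loop(physician: Physician, result: str, max_attempts: int = 3) -> str:
    """Closed loop: treat the acknowledgment as feedback and resend until confirmed.

    Returns "confirmed" on success, or "escalate" if no acknowledgment
    arrives within max_attempts, so that someone can follow up.
    """
    for _ in range(max_attempts):
        if physician.receive(result):
            return "confirmed"
    return "escalate"
```

With one lost message, the open-loop sender leaves the physician's inbox empty and never learns of the failure, while the closed-loop sender resends and reports the delivery as confirmed. When every attempt fails, the closed-loop version returns an explicit signal to escalate instead of failing silently.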

The Leveson team will continue working with Synensys on behalf of the FDA. Their next study will investigate diagnostic screenings outside the laboratory, such as at a physician’s office (point of care) or at home (over the counter). Since the start of the Covid-19 pandemic, nonclinical lab testing has surged in the country. About 600 million Covid-19 tests were sent to U.S. households between January and September 2022, according to Synensys. Yet, few systems are in place to aggregate these data or report findings to public health agencies.

“There’s a lot of well-meaning people trying to solve this and other lab data challenges,” Rose says. “If we can convince people to think of health care as an engineered system, we can go a long way in solving some of these entrenched problems.”

The Synensys research contract is part of the Systemic Harmonization and Interoperability Enhancement for Laboratory Data (SHIELD) campaign, an agency initiative that seeks assistance and input in using systems theory to address this challenge.